EU AI Act risk categories explained

EU AI Act Risk Classification

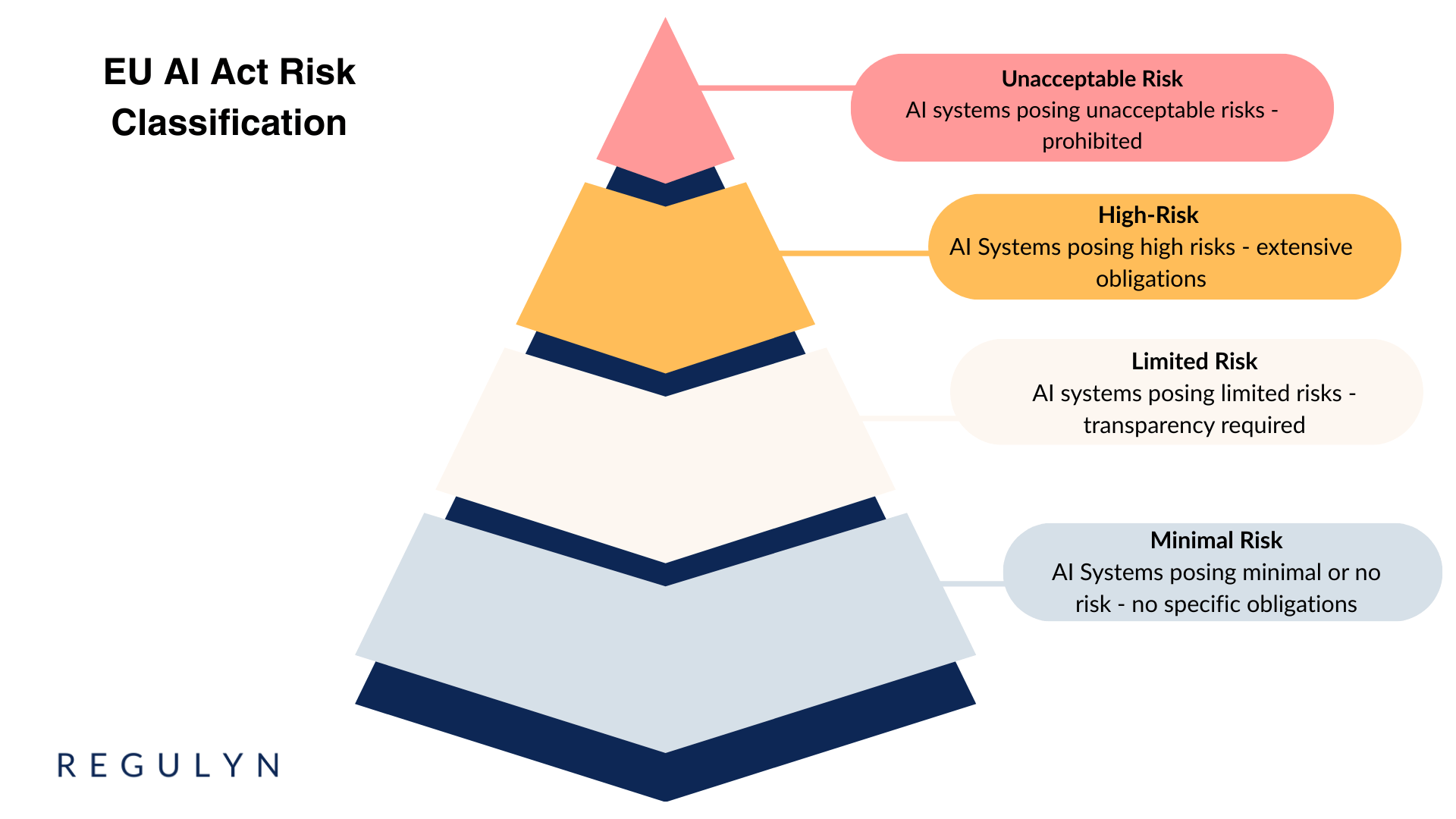

The EU Artificial Intelligence Act (Regulation (EU) 2024/1689, the “EU AI Act”) is the first comprehensive legal framework governing AI systems. It takes a risk-based approach, sorting AI systems into four tiers: prohibited (unacceptable risk), high-risk, limited-risk, and minimal-risk. The tiering is intended to keep the regulatory burden proportionate to the harm an AI system could cause.

Understanding these classifications is essential for organizations operating AI systems in the European market.

Prohibited AI Systems (Unacceptable Risk)

Certain AI practices are entirely banned within the EU due to unacceptable risks to fundamental rights and safety.

Prohibited practices include:

Subliminal manipulation techniques

Exploitation of vulnerabilities (related to age, disability, or socio-economic status)

Social scoring

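Real-time remote biometric identification in publicly accessible spaces for law enforcement purposes (narrow exceptions apply)

Biometric categorization that infers sensitive attributes (such as race, political opinions, or sexual orientation)

Emotion recognition in workplace and educational settings (except for medical or safety reasons)

Untargeted scraping of facial images (from the internet or CCTV footage) to build facial recognition databases

Predicting the risk that an individual will commit a criminal offence based solely on profiling or personality traits (limited exceptions exist)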

The prohibition applies both to the intended purpose and the actual effect of an AI system. An AI system producing manipulative or exploitative effects falls under the prohibition even where such outcomes were unintended.

Compliance consideration: Organizations must ensure prohibited AI systems are not placed on the market, put into service, or used within EU territory. The ban applies regardless of where the system was developed.

Example: A multinational corporation cannot deploy emotion recognition technology to assess employee engagement, motivation, or dissatisfaction in its EU offices, even if the same system operates legally in non-EU locations.

High-Risk AI Systems

High-risk AI systems face the most extensive regulatory requirements under the EU AI Act. Two pathways lead to high-risk classification.

First pathway: AI systems that are safety components of products covered by the EU harmonization legislation listed in Annex I (such as medical devices, machinery, or aviation equipment), or that are themselves such products, where the product must undergo a third-party conformity assessment.

Second pathway: AI systems operating in specific application areas listed in Annex III:

Biometric identification and categorization

Management and operation of critical infrastructure

Education and vocational training (determining access, assessing learning outcomes)

Employment, worker management, and access to self-employment (recruitment, promotion, contract termination)

Access to essential private services and public assistance benefits

Law enforcement (risk assessments, evaluation of evidence reliability)

Migration, asylum, and border control management

Administration of justice and democratic processes

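De minimis exception (Article 6(3)): An Annex III system is not classified as high-risk when it poses no significant risk of harm, for example because it performs a narrow procedural task, improves the result of a previously completed human activity, detects decision-making patterns without replacing or influencing the earlier human assessment, or performs a preparatory task. The exception never applies to systems that profile natural persons.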

Compliance obligations for high-risk systems include:

Comprehensive risk management system

Data governance and management practices

Technical documentation

Automatic logging capabilities

Transparency and information provision

Human oversight measures

Accuracy, robustness, and cybersecurity requirements

Quality management system

Registration in EU database

Post-market monitoring

Incident reporting obligations

Obligations fall primarily on providers (those who develop an AI system, or have one developed, and place it on the market or put it into service under their own name). Deployers face a narrower set of requirements, including implementing human oversight, monitoring input data, using the system according to the provider’s instructions, and, for public bodies, registration duties.

Examples:

Recruitment AI: An organization uses AI to screen CVs, rank candidates based on predicted performance, or recommend hiring decisions. This qualifies as high-risk employment AI requiring comprehensive documentation, risk management, and human oversight.

Medical device AI: A healthcare provider implements AI software analyzing real-time patient conversations to assess depression or anxiety severity. The system likely falls under both EU AI Act high-risk requirements (as safety component software) and Medical Device Regulation obligations, requiring dual compliance.

Compliance consideration: Organizations often benefit from structured legal support when implementing high-risk AI systems, given the breadth of obligations and the penalties at stake: up to €15 million or 3% of worldwide annual turnover for breaches of high-risk obligations, and up to €35 million or 7% for prohibited practices.
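Fine ceilings under Article 99 are set as a fixed amount or a share of worldwide annual turnover, whichever is higher (for SMEs and start-ups, whichever is lower). As a rough illustration of that arithmetic, the sketch below computes the ceiling for a given turnover. The tier labels and the function name `max_fine` are hypothetical names chosen for this example, and the result is an upper bound only, not a prediction of an actual fine.

```python
from decimal import Decimal

# Ceilings per Article 99: (fixed cap in EUR, share of worldwide annual turnover).
# The dictionary keys are illustrative labels, not terms from the Act.
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "other_obligation": (Decimal("15000000"), Decimal("0.03")),
    "incorrect_information": (Decimal("7500000"), Decimal("0.01")),
}


def max_fine(tier: str, worldwide_turnover_eur: Decimal, is_sme: bool = False) -> Decimal:
    """Return the maximum administrative fine for a tier and turnover."""
    fixed_cap, share = FINE_TIERS[tier]
    turnover_cap = worldwide_turnover_eur * share
    # SMEs and start-ups: the lower of the two amounts applies.
    # All other undertakings: the higher of the two applies.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # Company with EUR 1 billion turnover breaching a high-risk obligation.
    print(max_fine("other_obligation", Decimal("1000000000")))
```

For a company with €1 billion in turnover, the 3% share (€30 million) exceeds the €15 million fixed cap, so the percentage sets the ceiling.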

Limited-Risk AI Systems

Limited-risk AI systems trigger primarily transparency obligations under Chapter IV of the EU AI Act. While not explicitly defined as a risk category, these systems typically involve human interaction or synthetic content generation.

Transparency requirements apply when:

AI systems interact directly with natural persons (Article 50)

AI generates or manipulates image, audio, or video content (deepfakes)

AI generates or manipulates text for public information purposes

Provider obligations include:

Disclosing AI interaction to users (chatbots, virtual assistants)

Labeling AI-generated or manipulated content (synthetic media)

Designing systems to enable deployers to meet their transparency obligations

Deployer obligations include:

Informing natural persons when emotion recognition or biometric categorization AI processes their data

Disclosing deepfakes and AI-manipulated text published to inform the public (exceptions for authorized detection activities, content clearly labeled as parody/satire)

Example: A government agency implements an AI chatbot on its public website to help citizens navigate services and locate information. The chatbot must clearly disclose to users that they are interacting with an AI system rather than a human agent.
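One simple way to implement such a disclosure is to attach a notice to the first reply of every conversation. The sketch below shows that pattern. The class `ChatSession`, the constant `AI_DISCLOSURE`, and the wording of the notice are assumptions made for illustration. Whether a first-turn notice is sufficient in a given deployment is a legal question this code does not answer.

```python
from dataclasses import dataclass, field

# Illustrative wording only; the Act does not prescribe a specific text.
AI_DISCLOSURE = "You are chatting with an automated AI assistant, not a human agent."


@dataclass
class ChatSession:
    """Tracks one conversation and whether the AI notice has been shown."""

    disclosed: bool = False
    transcript: list[str] = field(default_factory=list)

    def reply(self, answer: str) -> str:
        """Return the answer, prefixed with the disclosure on the first turn."""
        if not self.disclosed:
            answer = f"{AI_DISCLOSURE}\n\n{answer}"
            self.disclosed = True
        # Keeping the transcript gives a record that the notice was delivered.
        self.transcript.append(answer)
        return answer


if __name__ == "__main__":
    session = ChatSession()
    print(session.reply("Opening hours are listed on the services page."))
    print(session.reply("Yes, you can book an appointment online."))
```

Storing the disclosed reply in the transcript means the organization can later show that the notice reached the user.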

Compliance consideration: Transparency obligations may also arise under other regulations, particularly GDPR Articles 13 and 14 (information to data subjects) and Article 22 (automated decision-making). Organizations should ensure coordinated compliance across applicable frameworks.

Minimal-Risk AI Systems

Some AI applications fall into the minimal-risk category, facing no specific obligations under the EU AI Act. These systems pose minimal or no risk to safety, health, or fundamental rights.

The Act encourages but does not mandate voluntary codes of conduct for minimal-risk AI. Organizations may adopt such codes to demonstrate responsible AI practices and align with broader governance expectations.

Examples:

Spam filtering: Email systems using AI to detect and categorize spam messages

Video enhancement: Creative software applying AI for color correction, resolution enhancement, or editing assistance

Compliance consideration: While the EU AI Act imposes no specific requirements, minimal-risk AI remains subject to other applicable regulation. GDPR requirements apply where personal data processing occurs. Sector-specific rules may impose additional obligations depending on the application context.

Classification Methodology

Determining correct risk classification requires systematic analysis.

Prohibited use check: Does the system or its effects fall within Article 5 prohibitions?

Harmonized legislation assessment: Does the AI system serve as a safety component for products under EU harmonization legislation?

Annex III evaluation: Does the system operate in a listed high-risk application area? If yes, does the de minimis exception apply?

Transparency trigger analysis: Does the system interact with humans or generate synthetic content?
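The four checks above can be read as an ordered decision procedure in which the strictest applicable tier wins. The sketch below encodes that ordering. The names `SystemFacts`, `RiskTier`, and `classify` are hypothetical, and each boolean stands in for a legal assessment that has to be made separately; the code only shows how the answers combine.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk (transparency obligations)"
    MINIMAL = "minimal-risk"


@dataclass
class SystemFacts:
    """Answers to the four checks; every field is an assumed input."""

    falls_under_article_5: bool = False
    safety_component_of_regulated_product: bool = False
    annex_iii_area: bool = False
    annex_iii_exception_applies: bool = False
    interacts_with_people: bool = False
    generates_synthetic_content: bool = False


def classify(facts: SystemFacts) -> RiskTier:
    """Apply the checks in order and return the strictest matching tier."""
    if facts.falls_under_article_5:
        return RiskTier.PROHIBITED
    if facts.safety_component_of_regulated_product:
        return RiskTier.HIGH
    if facts.annex_iii_area and not facts.annex_iii_exception_applies:
        return RiskTier.HIGH
    if facts.interacts_with_people or facts.generates_synthetic_content:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


if __name__ == "__main__":
    cv_screener = SystemFacts(annex_iii_area=True)
    print(classify(cv_screener).value)
```

The function returns a single tier for simplicity. A high-risk system that also interacts with people would still carry transparency obligations in addition to its high-risk requirements, so a fuller version would return a set of applicable obligations.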

Compliance consideration: Classification is not always straightforward, particularly for general-purpose AI systems, AI components within larger products, or systems with multiple functions. Organizations facing classification uncertainty benefit from legal assessment early in development or deployment planning.

Timeline for Compliance

The EU AI Act entered into force on 1 August 2024 with staggered compliance deadlines:

2 February 2025: Prohibition of unacceptable risk AI systems

2 August 2025: Obligations for general-purpose AI models

2 August 2026: Transparency obligations

2 August 2026: High-risk AI system requirements (providers and deployers)

2 August 2027: High-risk AI systems qualifying as safety components under harmonized legislation
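For planning purposes, the application dates can be kept in a small lookup that answers which obligations already apply on a given day. The sketch below uses the dates in the Regulation as adopted (Article 113): prohibitions from 2 February 2025, general-purpose AI model obligations from 2 August 2025, transparency and Annex III high-risk requirements from 2 August 2026, and product-related high-risk requirements from 2 August 2027. The names `MILESTONES`, `obligations_in_force`, and `next_milestone` are hypothetical, and the table would need updating if the dates are amended.

```python
from datetime import date
from typing import Optional

# (application date, obligation) pairs, in chronological order.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices (Article 5)"),
    (date(2025, 8, 2), "General-purpose AI model obligations"),
    (date(2026, 8, 2), "Transparency obligations and Annex III high-risk requirements"),
    (date(2027, 8, 2), "High-risk systems that are safety components of regulated products"),
]


def obligations_in_force(on: date) -> list[str]:
    """Return every obligation whose application date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[tuple[date, str]]:
    """Return the earliest milestone strictly after `on`, or None if none remain."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return min(upcoming) if upcoming else None


if __name__ == "__main__":
    today = date.today()
    for label in obligations_in_force(today):
        print("In force:", label)
    print("Next:", next_milestone(today))
```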

Organizations should begin compliance activities well before applicable deadlines, particularly for high-risk systems requiring substantial governance implementation.

Practical Next Steps

Organizations operating AI systems in the EU market should prioritize:

System inventory: Document all AI systems in use or development, including purpose, data sources, and deployment contexts

Classification assessment: Apply the risk-based framework to each identified system

Obligation mapping: Identify specific compliance requirements for each classified system

Gap analysis: Assess current practices against required documentation, technical measures, and governance structures

Implementation planning: Develop timelines and resource allocation for achieving compliance
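The inventory and gap-analysis steps lend themselves to a simple structured record per system. The sketch below is one possible shape, assuming each system is tracked with its purpose, data sources, deployment context, assigned tier, and two sets of controls. The class `AISystemRecord`, its field names, and the sample control names are hypothetical and would be replaced by whatever the organization's obligation mapping produces.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    """One inventory entry; all field names are illustrative."""

    name: str
    purpose: str
    data_sources: list[str]
    deployment_context: str
    risk_tier: str = "unclassified"
    required_controls: set[str] = field(default_factory=set)
    implemented_controls: set[str] = field(default_factory=set)

    def gaps(self) -> list[str]:
        """Controls that are required but not yet implemented, sorted by name."""
        return sorted(self.required_controls - self.implemented_controls)


if __name__ == "__main__":
    screener = AISystemRecord(
        name="CV screening tool",
        purpose="Rank job applicants",
        data_sources=["applicant CVs"],
        deployment_context="EU recruitment",
        risk_tier="high-risk",
        required_controls={"technical documentation", "human oversight", "logging"},
        implemented_controls={"logging"},
    )
    for gap in screener.gaps():
        print("Missing:", gap)
```

Keeping required and implemented controls as sets makes the gap analysis a single set difference, which can then feed the implementation plan.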

Organizations with high-risk AI systems face the most substantial compliance burden and should consider structured legal support to ensure comprehensive implementation of required controls.

This article is part of Regulyn’s Knowledge Center.
